Digital Citizen Corner

AI and Deepfakes: When Seeing Is No Longer Believing

What Are Deepfakes?

Deepfakes are pieces of media, such as videos, images, or audio, that are generated or altered using artificial intelligence to make them appear real. AI systems learn patterns from large amounts of data, including facial expressions, speech patterns, and body movements. Using this information, they can recreate a person’s likeness so that the person appears to say or do something they never actually said or did.

At first glance, these videos or images can look completely authentic. For many viewers, it may be difficult to tell the difference between genuine content and something created by artificial intelligence.

Why Deepfakes Matter

The technology behind deepfakes has both promising and concerning uses. In film production, for example, artificial intelligence can help recreate historical footage or improve visual effects. In education and accessibility, AI-generated voices can help people who have difficulty speaking to communicate, and can help make content available in different languages.

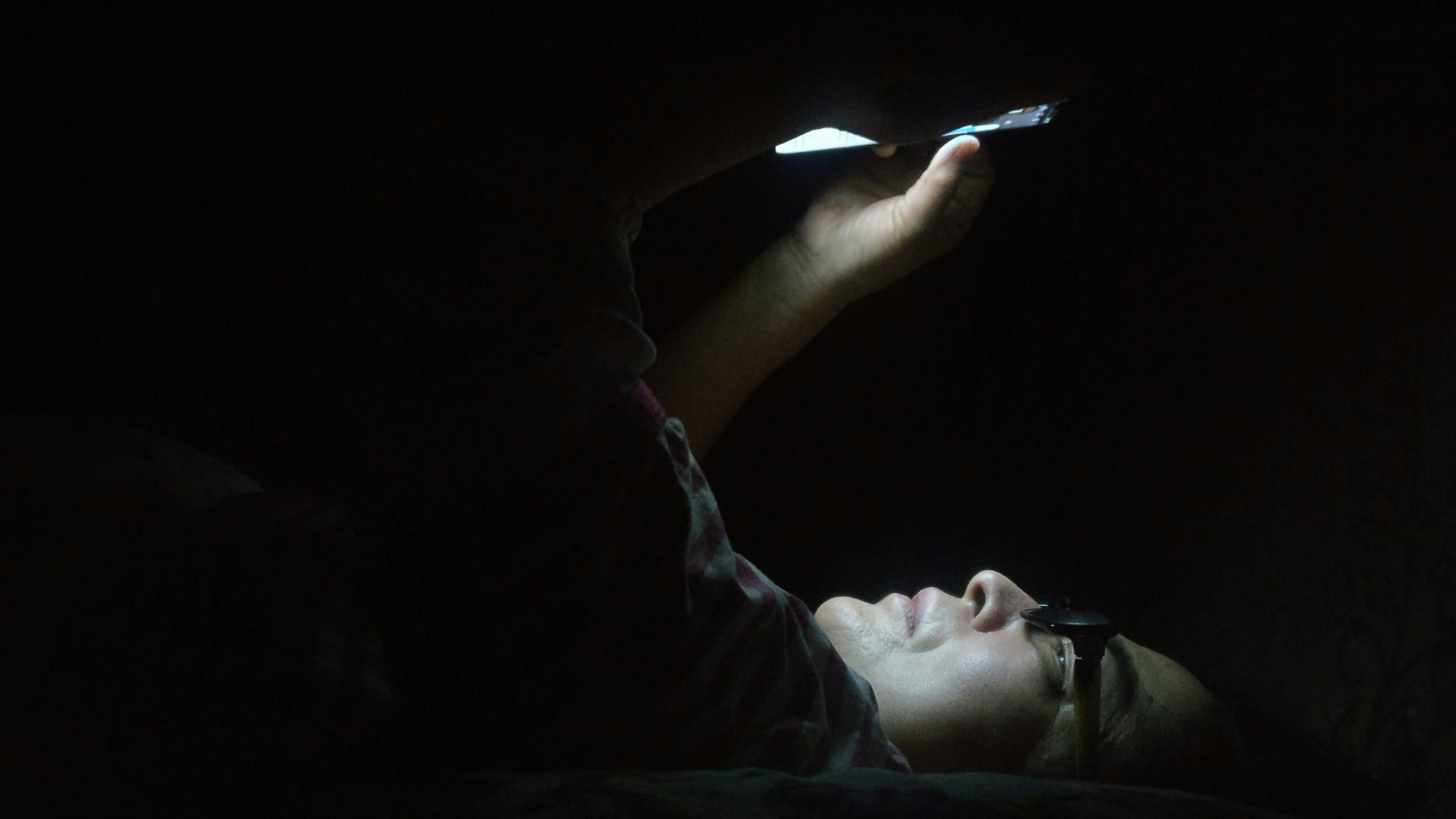

However, deepfakes can also be misused. They may be used to spread misinformation, impersonate individuals, damage reputations, or influence public opinion. When manipulated media spreads quickly online, it can blur the line between fact and fiction.

For this reason, understanding how deepfakes work, and how to approach digital media thoughtfully, is becoming increasingly important for everyone who participates in the online world.

How to Spot a Deepfake

As deepfake technology becomes more advanced, it is not always easy to detect manipulated media. Still, a few careful habits can help people approach online content more critically.

Look closely at facial movements.

In some deepfake videos, facial expressions may appear slightly unnatural. Blinking patterns, lip movements, or eye focus may not perfectly match the speech or emotion in the video.

Pay attention to lighting and shadows.

Lighting on a person’s face may not match the surrounding environment. Small inconsistencies in shadows or reflections can sometimes suggest that an image or video has been altered.

Listen carefully to the voice.

AI-generated voices can sound convincing, but they may include unusual pauses, robotic tones, or speech patterns that feel slightly unnatural.

Check the original source.

If a video seems shocking or unexpected, try to find out where it first appeared, and look for confirmation from reliable news organizations or official sources. If credible outlets are not reporting the same story, it may be a sign that the content is misleading.

Pause before sharing.

One of the most important habits of digital citizenship is taking a moment to verify information before spreading it online. A simple pause can help prevent misinformation from reaching wider audiences.

These small actions can make a meaningful difference in helping people navigate the digital world more responsibly.

A Digital World That Requires Awareness

Artificial intelligence will continue to shape the way information is created and shared. Deepfakes are just one example of how powerful modern technologies have become. While these tools can bring innovation and creativity, they also remind us that the digital world requires thoughtful engagement.

In a time when images, videos, and voices can be recreated by machines, critical thinking becomes more valuable than ever. By questioning what we see, verifying information, and sharing responsibly, digital citizens help protect trust in an increasingly complex online environment.

Written by Bryan Senfuma

Bryan is a Digital Rights Advocate, Digital Security Subject Matter Expert, Photographer, and Writer. His articles aim to simplify complex tech issues and inspire readers to make informed, confident choices online. Email: bryantravolla@gmail.com